Solution of non-linear equations. After each iteration the program should check for convergence. A MATLAB function for the Newton-Raphson method. Newton-Raphson Method MATLAB Program: source code for Newton's method in MATLAB with mathematical derivation and numerical example. Math 111: MATLAB Assignment 2: Newton's Method. Due Date: April 24, 2008. Once you have saved this program, for example as newton.m, typing the filename at the MATLAB prompt runs it.

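Newton's method - Wikipedia, the free encyclopedia. In numerical analysis, Newton's method (also known as the Newton-Raphson method) is a method for finding successively better approximations to the roots of a real-valued function. If the function satisfies the assumptions made in the derivation of the formula and the initial guess $x_0$ is close, then a better approximation is $x_1 = x_0 - f(x_0)/f'(x_0)$.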



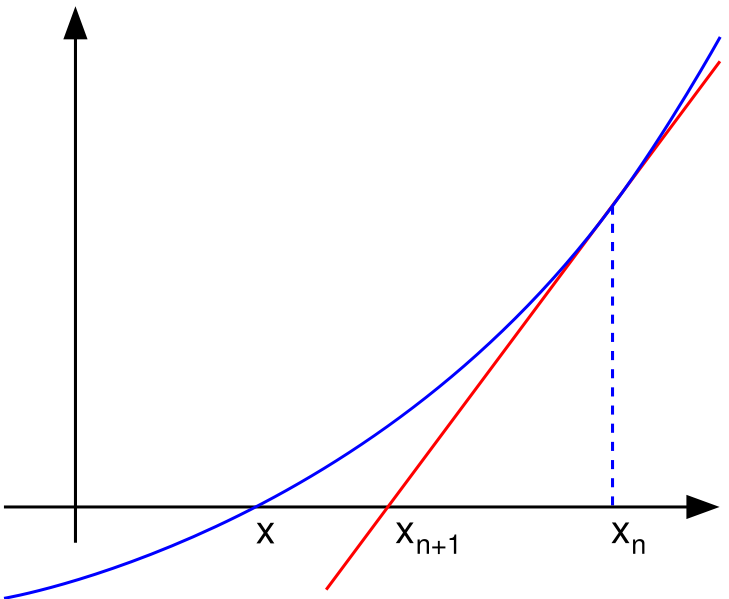

The method can also be extended to complex functions and to systems of equations. Description. We see that $x_{n+1}$ is a better approximation than $x_n$ for the root $x$ of the function $f$. The idea of the method is as follows: one starts with an initial guess which is reasonably close to the true root, then the function is approximated by its tangent line (which can be computed using the tools of calculus), and one computes the x-intercept of this tangent line (which is easily done with elementary algebra). This x-intercept will typically be a better approximation to the function's root than the original guess, and the method can be iterated. Suppose $f$ is a differentiable real-valued function. The formula for converging on the root can be easily derived. Suppose we have some current approximation $x_n$.

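Then we can derive the formula for a better approximation, $x_{n+1}$, by referring to the diagram. The equation of the tangent line to the curve $y = f(x)$ at the point $x = x_n$ is $y = f'(x_n)(x - x_n) + f(x_n)$, where $f'$ denotes the derivative of $f$. The x-intercept of this line (the value of $x$ such that $y = 0$) is then used as the next approximation to the root, $x_{n+1}$. In other words, setting $y$ to zero and $x$ to $x_{n+1}$ gives

$$0 = f'(x_n)(x_{n+1} - x_n) + f(x_n).$$

Solving for $x_{n+1}$ gives

$$x_{n+1} = x_n - \frac{f(x_n)}{f'(x_n)}.$$

The process is started with some initial value $x_0$; the closer to the zero, the better. But, in the absence of any intuition about where the zero might lie, a guess-and-check approach can narrow the possibilities to a reasonably small interval by appealing to the intermediate value theorem.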
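The update rule derived above, $x_{n+1} = x_n - f(x_n)/f'(x_n)$, translates almost directly into code. The following is a minimal Python sketch rather than a reference implementation: the function name `newton`, the stopping rule (step size below `tol`), the iteration cap, and the test function $x^2 - 2$ are all assumptions chosen for illustration.

```python
def newton(f, df, x0, tol=1e-12, max_iter=50):
    """Iterate x_{n+1} = x_n - f(x_n)/f'(x_n) until the step is smaller than tol."""
    x = x0
    for _ in range(max_iter):
        dfx = df(x)
        if dfx == 0:
            # A horizontal tangent never crosses the x-axis.
            raise ZeroDivisionError("derivative is zero; tangent has no x-intercept")
        step = f(x) / dfx
        x -= step
        if abs(step) < tol:
            return x
    raise RuntimeError("no convergence within max_iter iterations")


# Illustrative use (assumed example): the positive root of f(x) = x^2 - 2.
root = newton(lambda x: x * x - 2, lambda x: 2 * x, x0=1.0)
print(root)
```

Stopping on the step size is only one possible convergence check; testing `abs(f(x))` against a tolerance is an equally common choice.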

Furthermore, for a zero of multiplicity 1, the convergence is at least quadratic (see rate of convergence) in a neighbourhood of the zero, which intuitively means that the number of correct digits roughly doubles in every step. More details can be found in the analysis section below. Householder's methods are similar but have higher order for even faster convergence.

However, the extra computations required for each step can slow down the overall performance relative to Newton's method, particularly if $f$ or its derivatives are computationally expensive to evaluate. History. Newton's own formulation differs substantially from the modern method given above: Newton applies the method only to polynomials. He does not compute the successive approximations $x_n$. Finally, Newton views the method as purely algebraic and makes no mention of the connection with calculus.

Newton may have derived his method from a similar but less precise method by Vieta. The essence of Vieta's method can be found in the work of the Persian mathematician Sharaf al-Din al-Tusi, while his successor Jamshīd al-Kāshī used a form of Newton's method to find roots of numbers.

A special case of Newton's method for calculating square roots was known much earlier and is often called the Babylonian method. Newton's method was used by the 17th-century Japanese mathematician Seki Kōwa to solve single-variable equations, though the connection with calculus was missing.

In 1690, Joseph Raphson published a simplified description in Analysis aequationum universalis. Raphson again viewed Newton's method purely as an algebraic method and restricted its use to polynomials, but he describes the method in terms of the successive approximations $x_n$ instead of the more complicated sequence of polynomials used by Newton. Finally, in 1740, Thomas Simpson described Newton's method as an iterative method for solving general nonlinear equations using calculus, essentially giving the description above. In the same publication, Simpson also gives the generalization to systems of two equations and notes that Newton's method can be used for solving optimization problems by setting the gradient to zero.

Arthur Cayley, in his 1879 paper The Newton-Fourier imaginary problem, was the first to notice the difficulties in generalizing Newton's method to complex roots of polynomials with degree greater than 2 and complex initial values. This opened the way to the study of the theory of iterations of rational functions.

Newton's method is an extremely powerful technique. However, there are some difficulties with the method.

Difficulty in calculating the derivative of a function. An analytical expression for the derivative may not be easily obtainable and could be expensive to evaluate. In these situations, it may be appropriate to approximate the derivative by using the slope of a line through two nearby points on the function.
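One way to realize this idea is to take the two most recent iterates as the "two nearby points", which gives the secant iteration. The sketch below assumes that choice; the name `secant`, the tolerance, and the test function $x^3 - x - 2$ are illustrative assumptions, not part of the original text.

```python
def secant(f, x0, x1, tol=1e-12, max_iter=100):
    """Newton-like iteration with f'(x) replaced by the slope through two points."""
    for _ in range(max_iter):
        f0, f1 = f(x0), f(x1)
        if f1 == f0:
            raise ZeroDivisionError("secant line is horizontal")
        # Slope of the line through (x0, f0) and (x1, f1) stands in for f'(x1).
        slope = (f1 - f0) / (x1 - x0)
        x2 = x1 - f1 / slope
        if abs(x2 - x1) < tol:
            return x2
        x0, x1 = x1, x2
    raise RuntimeError("no convergence within max_iter iterations")


def g(x):
    # Assumed test function with a single real root between 1 and 2.
    return x ** 3 - x - 2


approx_root = secant(g, 1.0, 2.0)
print(approx_root)
```

No derivative is evaluated anywhere; each step costs one new function evaluation in principle, although this sketch re-evaluates `f(x0)` for clarity.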

Using this approximation would result in something like the secant method, whose convergence is slower than that of Newton's method. Failure of the method to converge to the root. Before implementing Newton's method, one should review the assumptions made in the proof of quadratic convergence. For situations where the method fails to converge, it is because the assumptions made in this proof are not met. Overshoot. If the first derivative is not well behaved in the neighborhood of a particular root, the method may overshoot and diverge from that root. An example of a function with one root, for which the derivative is not well behaved in the neighborhood of the root, is $f(x) = |x|^a$ with $0 < a < 1/2$, for which the root will be overshot and the sequence of $x$ will diverge. For $a = 1/2$, the root will still be overshot, but the sequence will oscillate between two values. For $1/2 < a < 1$, the root will still be overshot but the sequence will converge, and for $a \geq 1$ the root will not be overshot at all.
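The overshoot regimes can be checked numerically. The sketch below assumes the example function is $f(x) = |x|^a$ (root at 0), for which one Newton step works out to $x_{n+1} = x_n(1 - 1/a)$; the function name `newton_abs_power` and the chosen values of $a$ are illustrative assumptions.

```python
def newton_abs_power(a, x0, steps):
    """Newton iterates for f(x) = |x|**a, with f'(x) = a * |x|**(a - 1) * sign(x)."""
    xs = [x0]
    x = x0
    for _ in range(steps):
        sign = 1.0 if x > 0 else -1.0
        fx = abs(x) ** a
        dfx = a * abs(x) ** (a - 1) * sign
        x = x - fx / dfx
        xs.append(x)
    return xs


diverging = newton_abs_power(0.25, 1.0, 5)     # a < 1/2: overshoots and moves away
oscillating = newton_abs_power(0.5, 1.0, 4)    # a = 1/2: bounces between two values
converging = newton_abs_power(0.75, 1.0, 10)   # 1/2 < a < 1: overshoots but converges
no_overshoot = newton_abs_power(2.0, 1.0, 10)  # a >= 1: approaches from one side
print(oscillating)
```

In the first three cases the iterates change sign at every step, which is the overshoot described above; only the size of the factor $|1 - 1/a|$ decides whether they move away, oscillate, or converge.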

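Poor initial estimate. A large error in the initial estimate can contribute to non-convergence of the algorithm. To overcome this problem one can often linearise the function that is being optimized using calculus, logs, differentials, or even using evolutionary algorithms, such as the Stochastic Funnel Algorithm. Good initial estimates lie close to the final globally optimal parameter estimate. In nonlinear regression, the sum of squared errors (SSE) is only "close to" parabolic in the region of the final parameter estimates. Initial estimates found here will allow the Newton-Raphson method to quickly converge. It is only here that the Hessian of the SSE is positive and the first derivative of the SSE is close to zero.

Mitigation of non-convergence. In a robust implementation of Newton's method, it is common to place limits on the number of iterations, bound the solution to an interval known to contain the root, and combine the method with a more robust root-finding method. Slow convergence for roots of multiplicity greater than 1. If the root being sought has multiplicity greater than one, the convergence rate is merely linear (errors reduced by a constant factor at each step) unless special steps are taken. When there are two or more roots that are close together then it may take many iterations before the iterates get close enough to one of them for the quadratic convergence to be apparent. However, if the multiplicity $m$ of the root is known, one can use the modified iteration $x_{n+1} = x_n - m\,f(x_n)/f'(x_n)$, which preserves the quadratic convergence rate. On the other hand, if the multiplicity $m$ of the root is not known, it is possible to estimate $m$ after carrying out one or two iterations, and then use that value to increase the rate of convergence.

Analysis. Suppose that the function $f$ has a zero at $\alpha$, i.e. $f(\alpha) = 0$, and that $f$ is differentiable in a neighborhood of $\alpha$. If $f$ is continuously differentiable and its derivative is nonzero at $\alpha$, then there exists a neighborhood of $\alpha$ such that for all starting values $x_0$ in that neighborhood, the sequence $\{x_n\}$ converges to $\alpha$. If, in addition, $f$ has a second derivative at $\alpha$, the convergence is quadratic or faster. If the second derivative is not 0 at $\alpha$, then the convergence is merely quadratic. If the third derivative exists and is bounded in a neighborhood of $\alpha$, then

$$\Delta x_{i+1} = \frac{f''(\alpha)}{2 f'(\alpha)} (\Delta x_i)^2 + O\big((\Delta x_i)^3\big), \qquad \Delta x_i = x_i - \alpha.$$

But there are also some results on global convergence: for instance, given a right neighborhood $U_+$ of $\alpha$, if $f$ is twice differentiable in $U_+$ and if $f' \neq 0$ and $f \cdot f'' > 0$ in $U_+$, then, for each $x_0$ in $U_+$, the sequence $x_k$ is monotonically decreasing to $\alpha$. The proof of quadratic convergence expands $f$ by Taylor's theorem about a point close to a root. Suppose this root is $\alpha$.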
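The effect of a known multiplicity can be illustrated by scaling the Newton step by $m$, i.e. $x_{n+1} = x_n - m\,f(x_n)/f'(x_n)$. The sketch below is hedged accordingly: the function name `newton_multiplicity` and the test polynomial $(x-1)^2(x+1)$, which has a double root at $x = 1$, are assumptions made for the demonstration.

```python
def newton_multiplicity(f, df, x0, m=1, steps=10):
    """Run a fixed number of steps of x <- x - m * f(x) / f'(x)."""
    x = x0
    for _ in range(steps):
        dfx = df(x)
        if dfx == 0:
            # At a multiple root f and f' vanish together; stop if we land on it.
            break
        x = x - m * f(x) / dfx
    return x


def p(x):
    # Assumed test polynomial with a double root at x = 1.
    return (x - 1) ** 2 * (x + 1)


def dp(x):
    # Derivative of p: (x - 1) * (3x + 1).
    return (x - 1) * (3 * x + 1)


plain = newton_multiplicity(p, dp, 2.0, m=1)     # linear convergence
modified = newton_multiplicity(p, dp, 2.0, m=2)  # quadratic convergence restored
print(abs(plain - 1), abs(modified - 1))
```

After the same ten steps the plain iteration is still about $10^{-3}$ away from the root, while the scaled iteration has reached it to within rounding error.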

If the assumptions made in the proof of quadratic convergence are met, the method will converge. For the following subsections, failure of the method to converge indicates that the assumptions made in the proof were not met. Bad starting points. In some cases the conditions on the function that are necessary for convergence are satisfied, but the point chosen as the initial point is not in the interval where the method converges. This can happen, for example, if the function whose root is sought approaches zero asymptotically as $x$ goes to $\infty$ or $-\infty$. In such cases a different method, such as bisection, should be used to obtain a better estimate for the zero to use as an initial point. Iteration point is stationary.

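Consider the function $f(x) = 1 - x^2$, which has a maximum at $x = 0$ and roots at $x = \pm 1$. If we start iterating from the stationary point $x_0 = 0$ (where the derivative is zero), $x_1$ is undefined, since the tangent at $(0, 1)$ is parallel to the x-axis. Even if the derivative is small but not zero, the next iteration will be a far worse approximation. Starting point enters a cycle. For some functions, some starting points may enter an infinite cycle, preventing convergence. Let $f(x) = x^3 - 2x + 2$ and take 0 as the starting point. The first iteration produces 1 and the second iteration returns to 0, so the sequence will alternate between the two without converging to a root. In fact, this 2-cycle is stable: there are neighborhoods around 0 and around 1 from which all points iterate asymptotically to the 2-cycle (and hence not to the root of the function). In general, the behavior of the sequence can be very complex (see Newton fractal).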
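The 2-cycle is easy to reproduce. The sketch below assumes the cubic $f(x) = x^3 - 2x + 2$, which is consistent with the iterates described above (0 maps to 1 and 1 maps back to 0); the helper name `newton_iterates` and the second starting point $-2$ are illustrative assumptions.

```python
def newton_iterates(f, df, x0, steps):
    """Return the list [x_0, x_1, ..., x_steps] of Newton iterates."""
    xs = [x0]
    for _ in range(steps):
        x = xs[-1]
        xs.append(x - f(x) / df(x))
    return xs


def f(x):
    return x ** 3 - 2 * x + 2


def df(x):
    return 3 * x ** 2 - 2


cycle = newton_iterates(f, df, 0.0, 6)     # alternates between 0 and 1 forever
good = newton_iterates(f, df, -2.0, 10)    # a start on the other side converges
print(cycle)
print(good[-1])
```

Starting at 0 the iterates never leave the set {0, 1}, while starting at $-2$ the same iteration settles on the real root near $-1.77$.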

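The real solution of the equation $x^3 - 2x + 2 = 0$ is approximately $-1.769$. Derivative issues. If the function is not continuously differentiable in a neighborhood of the root, then it is possible that Newton's method will always diverge and fail, unless the solution is guessed on the first try.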

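Derivative does not exist at root. A simple example of a function where Newton's method diverges is the cube root. The cube root is continuous and infinitely differentiable, except for $x = 0$, where its derivative is undefined: $f(x) = x^{1/3}$. For any iteration point $x_n$, the next iteration point is

$$x_{n+1} = x_n - \frac{x_n^{1/3}}{\tfrac{1}{3} x_n^{-2/3}} = x_n - 3x_n = -2x_n,$$

so each step lands on the other side of the root and doubles the distance from it. In fact, the iterations diverge for every $f(x) = |x|^a$ with $0 < a < 1/2$. In the limiting case of $a = 1/2$ (square root), the iterations alternate indefinitely between $x_0$ and $-x_0$, so they do not converge in this case either. Discontinuous derivative. If the derivative is not continuous at the root, then convergence may fail to occur in any neighborhood of the root. Consider the function $f(x) = x + x^2 \sin(2/x)$ for $x \neq 0$, with $f(0) = 0$. Its derivative is $f'(x) = 1 + 2x \sin(2/x) - 2\cos(2/x)$ for $x \neq 0$, with $f'(0) = 1$; within any neighborhood of the root this derivative keeps changing sign, so $f(x)/f'(x)$ is unbounded near the root. Non-quadratic convergence. In some cases the iterates converge but do not converge as quickly as promised. In these cases simpler methods converge just as quickly as Newton's method.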

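Zero derivative. If the first derivative is zero at the root, then convergence will not be quadratic. Let $f(x) = x^2$; then $f'(x) = 2x$ and consequently $x - f(x)/f'(x) = x/2$. So convergence is not quadratic, even though the function is infinitely differentiable everywhere. Similar problems occur even when the root is only "nearly" double. For example, let $f(x) = x^2(x - 1000) + 1$; starting from $x_0 = 1$, it takes several iterations before the convergence appears to be quadratic. No second derivative. If there is no second derivative at the root, then convergence may fail to be quadratic. Let $f(x) = x + x^{4/3}$, so that $f'(x) = 1 + \tfrac{4}{3} x^{1/3}$, while $f''(x) = \tfrac{4}{9} x^{-2/3}$ is undefined at $x = 0$. Given $x_n$,

$$x_{n+1} = x_n - \frac{f(x_n)}{f'(x_n)} = \frac{\tfrac{1}{3} x_n^{4/3}}{1 + \tfrac{4}{3} x_n^{1/3}},$$

which has approximately 4/3 times as many bits of precision as $x_n$ has. This is less than the 2 times as many which would be required for quadratic convergence. So the convergence of Newton's method (in this case) is not quadratic, even though: the function is continuously differentiable everywhere; the derivative is not zero at the root; and $f$ is infinitely differentiable except at the desired root.

Complex functions. When dealing with complex functions, Newton's method can be directly applied to find their zeroes. Each zero has a basin of attraction in the complex plane, the set of all starting values that cause the method to converge to that particular zero. These sets can be mapped as in the image shown.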

For many complex functions, the boundaries of the basins of attraction are fractals. In some cases there are regions in the complex plane which are not in any of these basins of attraction, meaning the iterates do not converge. For example, if one uses a real initial condition to seek a root of $x^2 + 1$, all subsequent iterates will be real numbers, so the iterations cannot converge to either root, since both roots are non-real. In this case almost all real initial conditions lead to chaotic behavior, while some initial conditions iterate either to infinity or to repeating cycles of any finite length. Curt McMullen has shown that for any possible purely iterative algorithm similar to Newton's method, the algorithm will diverge on some open regions of the complex plane when applied to some polynomial of degree 4 or higher. However, McMullen gave a generally convergent algorithm for polynomials of degree 3.
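Basins of attraction can be explored by running the same iteration on complex starting values and recording which zero, if any, is reached. The sketch below assumes the polynomial $z^3 - 1$ as a stand-in example; the name `basin_index`, the tolerance, and the iteration cap are illustrative assumptions.

```python
import cmath

# The three cube roots of unity, i.e. the zeros of z^3 - 1.
ROOTS = [cmath.exp(2j * cmath.pi * k / 3) for k in range(3)]


def basin_index(z, max_iter=50, tol=1e-9):
    """Return the index of the root of z^3 - 1 that Newton's method reaches from z,
    or None if no root is reached within max_iter steps."""
    for _ in range(max_iter):
        if z == 0:
            return None  # derivative 3z^2 vanishes, so the step is undefined
        z = z - (z ** 3 - 1) / (3 * z ** 2)
        for k, r in enumerate(ROOTS):
            if abs(z - r) < tol:
                return k
    return None


print(basin_index(2 + 0j), basin_index(-1 + 1j), basin_index(-1 - 1j))
```

Evaluating `basin_index` over a grid of starting points and coloring each point by the returned index is one way to produce a picture of the basins.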

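Nonlinear systems of equations. One may also use Newton's method to solve systems of $k$ (nonlinear) equations, which amounts to finding the zeroes of continuously differentiable functions $F: \mathbb{R}^k \to \mathbb{R}^k$. In the formulation given above, one then has to left multiply with the inverse of the $k$-by-$k$ Jacobian matrix $J_F(x_n)$ instead of dividing by $f'(x_n)$. Rather than actually computing the inverse of this matrix, one can save time by solving the system of linear equations

$$J_F(x_n)\,(x_{n+1} - x_n) = -F(x_n)$$

for the unknown $x_{n+1} - x_n$. The method can also be applied to systems with more equations than unknowns by using the generalized inverse of the non-square Jacobian matrix. If the nonlinear system has no solution, the method attempts to find a solution in the non-linear least squares sense. Nonlinear equations in a Banach space. Another generalization is Newton's method to find a root of a functional $F$ defined in a Banach space. In this case the formulation is

$$X_{n+1} = X_n - [F'(X_n)]^{-1} F(X_n),$$

where $F'(X_n)$ is the Fréchet derivative computed at $X_n$. A condition for existence of and convergence to a root is given by the Newton-Kantorovich theorem.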
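For a system of two equations, the linear system $J_F(x_n)(x_{n+1} - x_n) = -F(x_n)$ can be solved in closed form, which keeps a demonstration free of external libraries. The sketch below is restricted to the two-variable case as a simplifying assumption; the name `newton_system_2d` and the example system ($x^2 + y^2 = 4$, $xy = 1$) are hypothetical choices.

```python
def newton_system_2d(F, J, x, y, tol=1e-12, max_iter=50):
    """Newton's method for two equations in two unknowns.

    F(x, y) returns (f1, f2); J(x, y) returns the Jacobian ((a, b), (c, d)).
    Each step solves J * (dx, dy) = -F by Cramer's rule instead of inverting J.
    """
    for _ in range(max_iter):
        f1, f2 = F(x, y)
        (a, b), (c, d) = J(x, y)
        det = a * d - b * c
        if det == 0:
            raise ZeroDivisionError("singular Jacobian")
        dx = (-f1 * d + f2 * b) / det
        dy = (-f2 * a + f1 * c) / det
        x, y = x + dx, y + dy
        if abs(dx) + abs(dy) < tol:
            return x, y
    raise RuntimeError("no convergence within max_iter iterations")


def F(x, y):
    # Assumed example: intersection of a circle and a hyperbola.
    return (x * x + y * y - 4, x * y - 1)


def J(x, y):
    return ((2 * x, 2 * y), (y, x))


sx, sy = newton_system_2d(F, J, 2.0, 0.5)
print(sx, sy)
```

For larger systems the same structure applies, with the closed-form solve replaced by a general linear solver.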

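Nonlinear equations over p-adic numbers. In p-adic analysis, the standard method to show that a polynomial equation in one variable has a p-adic root is Hensel's lemma, which uses the recursion from Newton's method on the p-adic numbers. Because of the more stable behavior of addition and multiplication in the p-adic numbers compared to the real numbers (specifically, the unit ball in the p-adics is a ring), convergence in Hensel's lemma can be guaranteed under much simpler hypotheses than in the classical Newton's method on the real line. Newton-Fourier method. The Newton-Fourier method is Joseph Fourier's extension of Newton's method that provides bounds on the absolute error of the root approximation while still providing quadratic convergence. Assume that $f(x)$ is twice continuously differentiable on $[a, b]$, that $f$ has a root in this interval, and that $f'(x), f''(x) \neq 0$ on this interval. This guarantees that there is a unique root on this interval, call it $\alpha$. If it is concave down instead of concave up then replace $f(x)$ by $-f(x)$, since they have the same roots.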

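Applications. Minimization and maximization problems. Newton's method can be used to find a minimum or maximum of a function. The derivative is zero at a minimum or maximum, so minima and maxima can be found by applying Newton's method to the derivative. The iteration becomes $x_{n+1} = x_n - f'(x_n)/f''(x_n)$. Solving transcendental equations. Many transcendental equations can be solved using Newton's method. Given the equation $g(x) = h(x)$, with $g(x)$ and/or $h(x)$ a transcendental function, one writes $f(x) = g(x) - h(x)$. The values of $x$ that solve the original equation are then the roots of $f(x)$, which may be found via Newton's method.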
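Applying Newton's method to the derivative gives the iteration $x_{n+1} = x_n - f'(x_n)/f''(x_n)$ for a stationary point. The following sketch assumes the objective $f(x) = e^x - 2x$, whose minimum is at $x = \ln 2$; the function name `newton_minimize` and this objective are illustrative assumptions.

```python
import math


def newton_minimize(df, d2f, x0, tol=1e-12, max_iter=50):
    """Find a stationary point of f by Newton's method applied to f'."""
    x = x0
    for _ in range(max_iter):
        step = df(x) / d2f(x)
        x -= step
        if abs(step) < tol:
            return x
    raise RuntimeError("no convergence within max_iter iterations")


# Assumed objective f(x) = exp(x) - 2x, so f'(x) = exp(x) - 2 and f''(x) = exp(x).
x_min = newton_minimize(lambda x: math.exp(x) - 2, lambda x: math.exp(x), x0=0.0)
print(x_min, math.log(2))
```

The iteration only locates a point where $f'$ vanishes; whether it is a minimum or a maximum has to be read off the sign of $f''$ there (positive in this example).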

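Newton's method is one of many methods of computing square roots. For example, finding the square root of a positive number $a$ is equivalent to finding the positive root of $f(x) = x^2 - a$, for which the iteration becomes $x_{n+1} = \tfrac{1}{2}(x_n + a/x_n)$. With only a few iterations one can obtain a solution accurate to many decimal places. Solution of cos(x) = x. We can rephrase that as finding the zero of $f(x) = \cos(x) - x$, whose derivative is $f'(x) = -\sin(x) - 1$.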
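Both examples can be run in a few lines. In the sketch below the square-root routine uses the iteration $x_{n+1} = \tfrac{1}{2}(x_n + a/x_n)$, and the second routine applies Newton's method to $f(x) = \cos(x) - x$. The function names, the starting values, and the sample input 612 are arbitrary illustrative assumptions.

```python
import math


def babylonian_sqrt(a, x0=1.0, steps=20):
    """Newton's method on f(x) = x^2 - a, i.e. x <- (x + a / x) / 2."""
    x = x0
    for _ in range(steps):
        x = 0.5 * (x + a / x)
    return x


def solve_cos_equals_x(x0=1.0, tol=1e-12, max_iter=50):
    """Newton's method on f(x) = cos(x) - x, with f'(x) = -sin(x) - 1."""
    x = x0
    for _ in range(max_iter):
        step = (math.cos(x) - x) / (-math.sin(x) - 1.0)
        x -= step
        if abs(step) < tol:
            return x
    raise RuntimeError("no convergence within max_iter iterations")


s = babylonian_sqrt(612.0)  # 612 is an arbitrary sample value
r = solve_cos_equals_x()
print(s, r)
```

A fixed step count is used for the square root only to keep the sketch short; a tolerance-based stop, as in the second routine, is the more usual choice.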
